Project Overview

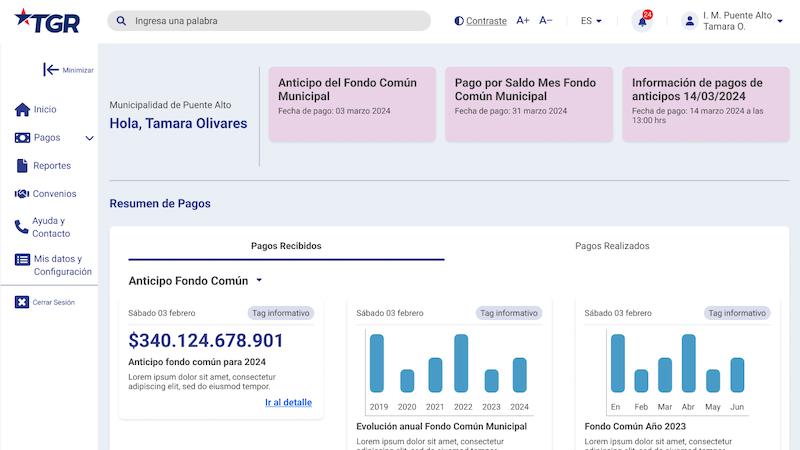

Between 2019 and 2022, I led two comprehensive rounds of web and mobile quality studies commissioned by Chile's Superintendencia de Pensiones — the government regulator overseeing the country's pension system. The goal was to evaluate the digital platforms of every active pension fund administrator (AFP) in Chile and establish an official industry benchmark.

Chile's pension system is mandatory for all formal workers, making AFP platforms essential services used by millions. The regulator needed an objective, standardized evaluation framework to assess and compare quality across the industry — and to hold administrators accountable for improving their digital experiences.

AFPs Evaluated

- AFP Provida (MetLife)

- AFP Cuprum (Principal Financial Group)

- AFP PlanVital (Generali Group)

- AFP Habitat

- AFP Capital

- AFP Modelo

- AFP UNO (added in Round 2)

Study Rounds

Round 1 (2019–2020) — 6 AFPs

The first round established the baseline. We evaluated 6 pension fund administrators through heuristic analysis, cognitive walkthroughs, and moderated usability testing. Each AFP received a two-part detailed report, a usability test report with prioritized recommendations, and a final stakeholder presentation. A consolidated industry report was delivered to the Superintendencia.

Deliverables per AFP: Heuristic evaluation, cognitive walkthrough with screen recordings, usability test sessions, two-part final report, usability test report with recommendations, final presentation.

Industry deliverables: Consolidated benchmark report, best practices document, and formal presentation to the Superintendencia de Pensiones.

Round 2 (2021–2022) — 7 AFPs

The second round expanded the evaluation to include AFP UNO and deepened the analysis. We recorded 88 moderated user test sessions across all 7 AFPs (81 of them completed), with detailed flow analysis per administrator. Between October 2021 and April 2022 we held 11 formal progress meetings and training sessions with the regulator, including methodology transfer so that the Superintendencia's team could interpret and use the benchmark results independently.

Deliverables per AFP: Cognitive walkthrough report, usability test sessions with video recordings, detailed quality report, final presentation, summary video.

Industry deliverables: The official industry benchmark report, "Indicador de Calidad Web" (Web Quality Indicator); a conclusions presentation; and a training session for the regulator's staff.

Methodology

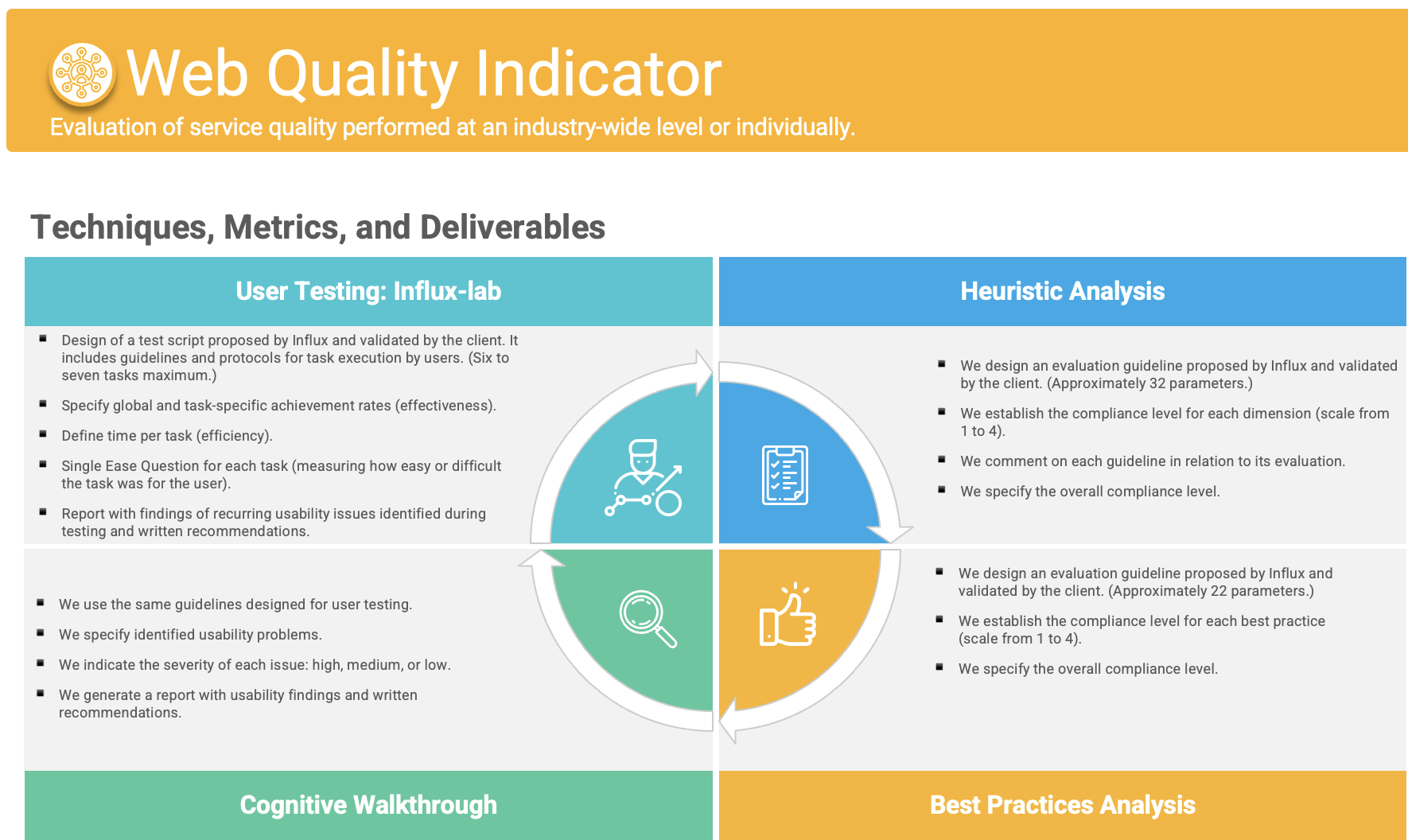

We developed a comprehensive evaluation framework that combined quantitative measurements with qualitative insights, applied consistently across all AFPs and both rounds to enable meaningful comparison:

Expert Evaluation

- Heuristic Analysis: Assessment against standardized usability principles — 32 heuristics plus 22 best-practice criteria in Round 1, expanded to a 41-principle framework in Round 2

- Cognitive Walkthrough: Expert simulation of user behavior on critical tasks, documented with screen recordings

- Accessibility Evaluation: Compliance analysis against international WCAG standards

- Flow Documentation: Detailed mapping of user flows per AFP for each critical task

User Testing

- Task-Based Usability Testing: 10–15 moderated sessions per AFP, testing seven critical tasks:

  - Access password recovery

  - Security key recovery

  - Security key request

  - Fund switching

  - Fund distribution

  - Viewing account balance

  - Initiating pension application

- Metrics Collection: Success rate, task completion time, perceived ease of use

- Post-Task Surveys: Satisfaction and recommendation likelihood

- Video Recording: All sessions recorded for evidence-based reporting
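As an illustration of how the session metrics listed above could be aggregated, here is a minimal Python sketch. The record fields, the 1–7 ease scale, the "AFP A" label, and the sample values are all hypothetical; they are not the study's data or its actual tooling.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record layout)."""
    afp: str
    task: str
    completed: bool
    seconds: float
    ease: int  # perceived ease of use, assumed 1-7 scale


def summarize(attempts):
    """Group attempts by (AFP, task) and compute the three metrics."""
    groups = {}
    for attempt in attempts:
        groups.setdefault((attempt.afp, attempt.task), []).append(attempt)

    summary = {}
    for key, items in groups.items():
        # Completion time is only meaningful for attempts that succeeded.
        completed_times = [a.seconds for a in items if a.completed]
        summary[key] = {
            "n": len(items),
            "success_rate": sum(a.completed for a in items) / len(items),
            "median_seconds": median(completed_times) if completed_times else None,
            "mean_ease": mean(a.ease for a in items),
        }
    return summary


# Made-up sample records, for illustration only.
attempts = [
    TaskAttempt("AFP A", "Fund switching", True, 120.0, 5),
    TaskAttempt("AFP A", "Fund switching", False, 300.0, 2),
    TaskAttempt("AFP A", "Fund switching", True, 90.0, 6),
    TaskAttempt("AFP A", "Fund switching", True, 150.0, 5),
]
summary = summarize(attempts)
print(summary[("AFP A", "Fund switching")])
```

Using the median of completed attempts for time is one possible design choice: it keeps a single very slow session from dominating a small sample of 10 to 15 participants.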

Web Quality Indicator

We developed a proprietary Indicador de Calidad de Servicio Web (Web Service Quality Indicator) that combines five dimensions into a single comparable score:

- Technical quality (from expert evaluations)

- Perceived quality (from user testing)

- User efficiency (time to complete tasks)

- User effectiveness (task success rates)

- Satisfaction (post-task rating)

This indicator became the official benchmark used by the Superintendencia to compare and track quality across Chile's entire pension fund industry.
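To make the idea of a single composite score concrete, the sketch below combines the five dimensions as a weighted average. This is an assumption for illustration, not the indicator's actual formula: the equal default weights, the dimension keys, the requirement that each dimension is already normalized to a 0–10 scale, and the sample scores are all hypothetical.

```python
from typing import Dict, Optional

# Hypothetical keys for the five dimensions named above.
DIMENSIONS = (
    "technical_quality",
    "perceived_quality",
    "efficiency",
    "effectiveness",
    "satisfaction",
)


def composite_score(
    scores: Dict[str, float],
    weights: Optional[Dict[str, float]] = None,
) -> float:
    """Weighted average of five dimension scores, each assumed to be on 0-10."""
    if weights is None:
        # Assumption: equal weighting. The real indicator may weight differently.
        weights = {name: 1.0 for name in DIMENSIONS}

    for name in DIMENSIONS:
        if name not in scores:
            raise ValueError(f"missing dimension: {name}")
        if not 0.0 <= scores[name] <= 10.0:
            raise ValueError(f"{name} must be between 0 and 10")

    total_weight = sum(weights[name] for name in DIMENSIONS)
    weighted_sum = sum(scores[name] * weights[name] for name in DIMENSIONS)
    return weighted_sum / total_weight


# Made-up scores for a fictional administrator.
example = {
    "technical_quality": 8.0,
    "perceived_quality": 7.0,
    "efficiency": 6.0,
    "effectiveness": 9.0,
    "satisfaction": 7.5,
}
print(composite_score(example))  # 7.5
```

Raw measurements such as task time in seconds or success rate in percent would need to be mapped onto the common scale before this step; how that mapping is done is outside this sketch.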

Scale and Deliverables

Across both rounds, this project produced:

- ~140 moderated user test sessions, all with video documentation: 10 per AFP in Round 1 (~60 total) and 88 recorded sessions in Round 2 (81 completed)

- 65+ deliverables: detailed per-AFP reports, cognitive walkthrough reports, usability test reports, presentations, and summary videos

- 2 industry benchmark reports for the Superintendencia de Pensiones

- 11 formal meetings and training sessions with the regulator during Round 2 (October 2021 – April 2022)

- Training sessions to transfer methodology knowledge to the Superintendencia's team

- Best practices document establishing industry standards

- Per-AFP finding summaries used for intermediate stakeholder meetings

Key Findings

Our research revealed consistent patterns across the industry:

- Authentication Confusion: Users consistently struggled to differentiate between access passwords and security keys, making credential recovery one of the most error-prone flows across platforms.

- Fund Management Complexity: Processes for switching and distributing retirement funds used inconsistent interaction models, with success rates varying widely across AFPs.

- Pension Application Barriers: Initiating the pension application was the most problematic task industry-wide, with roughly half of users unable to complete it successfully (49% average success rate).

- Mobile Experience Gaps: Tasks on mobile devices showed 15–20% lower success rates compared to desktop, particularly for complex operations.

- Accessibility Shortcomings: Industry-average accessibility compliance was below 45%, with contrast and screen reader compatibility as the most common failures.

- Inconsistent Design Patterns: Many AFPs had different visual and interaction designs for different sections of their platforms, breaking user expectations when navigation patterns changed.

Solutions and Impact

For each AFP, we developed tailored improvement roadmaps with prioritized recommendations:

- Authentication Simplification — Clearer credential management, contextual help, and streamlined recovery paths

- Task Redesign — Standardized interaction patterns for complex tasks, simplified multi-step processes

- Information Architecture — Navigation aligned with user mental models, consistent terminology and progressive disclosure

- Mobile Optimization — Adapted critical flows for small screens with touch-friendly interfaces

- Accessibility Enhancements — Higher contrast ratios, screen reader compatibility, and alternative navigation

Longitudinal Impact

By comparing Round 1 and Round 2 results, we observed measurable industry-wide improvements:

- Web Quality Indicator industry average rose from 6.74 to 7.96 (out of 10)

- Heuristic compliance improved from 77.2% to 78.1% across platforms

- Average task completion times decreased and user-reported satisfaction increased

- The performance gap between the highest- and lowest-scoring AFPs narrowed significantly

The competitive benchmarking approach proved especially effective — AFPs that could see how they compared to their peers were more motivated to implement improvements.
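For readers who want the reported round-over-round changes as absolute and relative differences, a small worked calculation follows. It uses only the two pairs of figures stated above; the helper name `change` is just for illustration.

```python
def change(before: float, after: float) -> tuple:
    """Return (absolute change, relative change in percent), rounded."""
    absolute = after - before
    relative_pct = absolute / before * 100
    return round(absolute, 2), round(relative_pct, 1)


# Web Quality Indicator industry average, on a 0-10 scale.
indicator = change(6.74, 7.96)
# Heuristic compliance, in percent (so the absolute change is in percentage points).
heuristics = change(77.2, 78.1)

print(indicator)   # (1.22, 18.1)
print(heuristics)  # (0.9, 1.2)
```

Read this way, the composite indicator rose by about 1.22 points (roughly 18% relative to Round 1), while heuristic compliance moved by under one percentage point.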

Lessons Learned

- Regulator as Client: Working for the regulator gave the study authority that individual AFP engagements would not have had. The benchmark created healthy competition and accountability.

- Longitudinal Value: Two rounds of evaluation revealed how improvements in one area sometimes created unexpected issues in others, and allowed us to track whether recommendations were actually implemented.

- Mixed Methodology: Combining expert evaluation with user testing provided a comprehensive view that neither approach could deliver alone. The cognitive walkthroughs predicted many of the issues later confirmed by user testing.

- Knowledge Transfer: Training the regulator's team to interpret benchmark results ensured the framework would outlast our direct involvement.

- Standardized Tasks: Using the same task set across all platforms enabled meaningful cross-AFP comparison and identification of best practices.